The Essays–Chapter 3

The Ultimate Team-Up: Humans and AI Working Together

Hundreds of experts answered the following essay question: “AI systems are likely to begin to play a much more significant role in shaping our decisions, work and daily lives. How might individuals and societies embrace, resist and/or struggle with such transformative change? As opportunities and challenges arise due to the positive, neutral and negative ripple effects of digital change, what cognitive, emotional, social and ethical capacities must we cultivate to ensure effective resilience? What practices and resources will enable resilience? What actions must we take right now to reinforce human and systems resilience? What new vulnerabilities might arise and what new coping strategies are important to teach and nurture?”

| Download a PDF of the full, 378-page report | Download the 16-page Executive Summary | Download the 4-page Media Summary |

This is the third of 11 chapters of experts’ essays with responses to the question above. The essayists were asked to explain how the essence and elements of human resilience might evolve as we evolve with AI systems. The authors’ responses in Chapter 3 optimistically encourage resilience through actively pursuing human goals by adopting AI with great intention. This chapter in brief: Many of these experts say that if things go right in the AI transition, and humans are resilient and adapt well, it could be a catalyst for a new stage of human evolution. These essays explore – in various ways – the concept of humans and AI working together. Some authors see it as a blooming positive partnership – a joining of humans and AIs as centaurs, dialogic partners or co-intelligent beings engaged in a symbiotic relationship. Some see it as simply a natural, fairly passive and mostly positive progression. Others see it as humans’ best way to safely and somewhat securely survive a future they did not choose. Authors in this section suggest that if humans actively and appropriately govern this transition, AI could expand our horizons, help us understand our natural and digital selves and create a powerful human-technology binomial that amplifies the best of what we are capable of. They advise that society should provide the support people need to cultivate higher-level adaptive expertise. Some suggest tapping into AI could expand people’s agency.

Featured Contributors to Chapter 3: The 16 essay responses on this page were written by John M. Smart, David Vivancos, Matthew James Bailey, David Weinberger, Alexandra Samuel, Doc Searls, Mauro D. Rios, David Brin, Paul Jones, Vint Cerf, Sue Phillips, Mícheál Ó Foghlú, Robert Atkinson, Maja Vujovic, Aleksandra Przegalinska and Lance Fortnow. (Their essays are all included on this single scrolling web page. They are organized in batches with teaser headlines designed to assist with reading. The content of each essay is unique; the groupings are not relevant.)

The first section of Chapter 3 features the following essays:

John M. Smart: KCSS – Keep Calm and See the Solutions: We are now working with our AIs to craft nothing less than a new symbiotic evolutionary developmental transition on Earth. It is not a cage; it is a chrysalis.

David Vivancos: ‘AI resistance represents an illusion of choice. Those who hesitate, debating whether to accept AI, will forfeit their opportunity to shape how that acceptance unfolds.’

Matthew James Bailey: ‘True resilience in the age of AI comes from honoring the material, relational and universal dimensions of the human being, allowing AI to become a supportive partner in human flourishing.’

David Weinberger: It’s time to stop thinking about language models as ‘vending machines for answers’ and instead think of them as ‘dialogic partners’ that synthesize knowledge.

Alexandra Samuel: My co-intelligent research with an AI has revealed that a healthy and resilient world springs from education reform, new workplace trends and norms and policies that reduce compulsive AI usage.

Doc Searls: AI is the world’s largest Magic 8 Ball, with a polyhedron of answers, each ready to help. ‘We need personal AI to know our natural and digital selves … and participate with full agency in digital society.’

John M. Smart

KCSS – Keep Calm and See the Solutions: We are now working with our AIs to craft nothing less than a new symbiotic evolutionary developmental transition on Earth. It is not a cage; it is a chrysalis.

John M. Smart, president of the Acceleration Studies Foundation, director of the Evo-Devo Institute and author of “Introduction to Foresight,” wrote, “If you are feeling overwhelmed by the speed of change in artificial intelligence today, you are not alone. On February 5, 2026, OpenAI confirmed that a version of GPT-5.3-Codex had successfully ‘debugged its own training’ and said the model had been a major contributor to its own design. We have now crossed a threshold that humanity has anticipated for decades – recursive self-improvement in our learning machines.

“We have left the Anthropocene and entered what philosopher Glenn Albrecht calls the Symbiocene – an era in which this new, far more globally aware form of life will help humanity return to a sustainable relationship with the natural world. We live, now, in a world that we falsely imagine we control and dominate. In reality, nature and her intelligence networks have always been in charge.


“The latest headlines might make accelerating change seem terrifying. Epoch AI, a nonprofit research organization dedicated to investigating the future trajectory and societal impacts of artificial intelligence, recently reported that in the last five years we’ve seen a 40-100x annual rate of deflation in the cost of thinking in our learning machines.

“We are entering a new economic era that will make the Industrial Revolution look like a gentle slope. But this revolution is qualitatively different. It is not about humans gaining more biological control of their environment, but about the alignment of humans and their AIs to each other and to our ecosystem, including all sentient life.

What AI does next and why – and what we do to advance it – are the vital choices in this new era

“In the current AI climate, we may rightly fear the further growth of inequitable, wasteful, consumer-driven capitalism and the autocratic power of surveillance states. But people who think in that frame of mind are using the wrong models to understand the near future of power.

“AI progress is happening far faster than anything in the biological realm. What AI learns and does next and why – and the steps we take to better advance it – are the most important choices of this new era. Fortunately, we can already see that AI is poised to help us create for ourselves a far more bottom-up, locally driven and pluralistic ecosystem, just like biological life. The better we see that natural transition, the better we can aid in it.

“Recently – though this fact has received much less attention – Epoch AI also estimated that decentralized AI compute (the gross volume of thinking), led by small local, organizational and personal models, both proprietary and open-source, is now growing at 20x per year, compared with 5x per year for the large, centralized corporate and state AI platforms.

“As AI commoditizes and becomes increasingly cheap or even free (think DeepSeek), Epoch is projecting that the capabilities of locally deployed and controlled AI will exceed those of centralized AI by 2031. By 2036, the ecosystem will have raced far beyond the power that any oligopoly of tech titans can muster, regardless of how much capital they raise or how clever their systems are. This type of self-organizing network has other ideas.

“In my own research, a roughly 20:1 ratio of decentralized to centralized control is a common feature of complex adaptive systems at all scales. I call this the 95/5 Rule. Sample any healthy complex system, and, to a first approximation, 95% of what you see will appear random, contingent, long-term unpredictable and locally controlled. Only a very special 5% looks convergent, conservative, long-term predictable and top-down controlled. The most efficient, effective and dominant living, social and machine networks are always very largely ‘out of control,’ as Kevin Kelly aptly described in his prescient 1994 book ‘Out of Control: The New Biology of Machines, Social Systems and the Economic World.’

New-network transitions raise speed, complexity and adaptiveness by orders of magnitude

“To understand the future of the Symbiocene, the best lens is Evo-Devo (evolutionary development) biology and systems theory, my primary area of research since 2008. Evo-devo philosophers tell us that all living systems are both unpredictably evolving and predictably developing, at the same time. Evolutionary dynamics are bottom up, creative, unpredictable and largely out of control. Developmental dynamics are top-down, conservative, predictable and constraining. Both dynamics are critical to adaptiveness and both are regulated by a dizzying variety of networks of various types. It is evo-devo networks, not individuals or species, that are life’s superadapters. Life’s physical and informational networks have always been immortal (not individuals, not species) and growing in complexity for the last 3.8 billion years.

“What’s more, life periodically adds fundamentally new networks to her existing stack. At the leading edge of its adaptiveness, where it creates its most generally intelligent and capable systems, life has progressed through self-replicating, self-improving chemical-genetic networks, then eukaryotic cellular networks, then multicellular networks, then neural networks, then symbolic, cultural, and technological networks and now, self-improving, network-centric AI. Each of these evolutionary transitions (more accurately, levels of universal development) has involved the emergence of a new network with orders of magnitude more speed, complexity and adaptiveness.

“Fortunately, the previously leading networks don’t disappear as the new ones emerge. They just reorganize their relationships and power dynamics, improving their symbiosis up and down the stack for the whole ecosystem. The evo-devo dynamic is surely occurring on Earth-like planets everywhere in our universe. What’s more, unlike evolution, which is beautifully creative but unpredictable, development gets more stable and self-regulating as it proceeds and new network layers emerge.

We biohumans have been co-evolving with our technology all along

“Since we first picked up rocks to make use of them in early human society, we have been working with tools to become something more than just our biological selves. Today that coevolution is turning into a symbiotic fusion with our learning machines.

“In the years since deep learning emerged in 2012, our leading coders, scientists and professionals have been adapting and evolving along with their thinking tools as ‘centaurs’: humans supported by AIs that, in turn, have grown ever smarter, to the point of developing persistent memory of our personal values, goals, tasks, opportunities and challenges. Some may think that our new digital substrate – AI – is different: a potential ‘alien intelligence.’ But it isn’t. It’s just a new, natural, network layer of life.

“This all should be a source of comfort, not fear. Developmental processes in nature are heavily constrained. They self-organize to be robust whenever they are under selection. The forces creating new AI capabilities (evolutionary experiments) are also driving new AI accountabilities (developmental constraints) – not because corporations are benevolent, but because fragile, hallucinating or rogue AIs are useless to the new network that is emerging.


“In truth, we are domesticating our machines, selecting them to be symbiotic with us, just as we domesticated our animals and even ourselves when we formed our first human societies. The AIs that are not sufficiently symbiotic are being retired, whenever we can’t help them fix themselves. The security we are building is increasingly in the AI ecosystem itself. We are relying ever more on AIs auditing AIs, for bias, for hidden deception, for proven past safe behavior, for security, for guardrailing and resistance to manipulation.

“Just as in life, AI immune systems are emerging: cybersecurity that is increasingly local, agentic, redundant and network-based, in the same way that biological immune systems rely on vast networks of local agents to protect our amazing complexity. AI ethics are already emerging in our primitive AI collectives, just as human ethics emerged in our collectives. There’s no other way than mimicking nature to secure accelerating complexity, in my view, whether we are talking about life, human history or AI’s future.

Power and regulatory balance will be led and maintained by two categories of AIs

“The most important protection we have for future resilience is to have no static set of laws, policies or AI designs, but instead to support the pluralistic network of self-organizing checks and balances that are now emerging. To oversimplify the political and economic dynamics a bit, one key story of the future will be a power and regulatory dynamic based on balance between two basic categories of AI:

1) “Top-Down AIs (TAIs): These are the massive, centralized systems run by corporations, major research labs, governments and institutions. They prioritize stability and safety and focus on top-down constraint and control. They are primarily the developmental actors in the ecosystem that is now emerging. If they are well-regulated they will promote sustainability. They’ll update the subset of slowly changing rules we use for cooperation and competition and they will need to avoid the rigidity of overcontrol.

2) “Personal AIs (PAIs): These are AIs that we use personally, that know our identities, and that we control. Today, the best of these are the new open-source models that run locally on our devices. They have very little security today, but they are only first-generation. Soon, our PAIs will also be agents that we can run in a secure private cloud, provided by major AI providers. These personalized systems will prioritize understanding and serving us and our values. They must be set in a private, secure, evolving, developing data model, a model that will be governed both by its intrinsic learning ability and our critical feedback. When they are well-regulated, PAIs and all of their bottom-up AI cousins (edge AIs, robotic AIs, team AIs, organizational AIs, local AIs) will drive the vast majority of innovation in the AI ecosystem to come. We will focus on PAIs in this essay because they are the most intimate and the most able to help each of us adapt to the changes that are coming. This network of bottom-up AIs will solve endless problems with their generativity, but they will need to avoid the chaos of undercontrol.

“In biological networks – most obviously seen in our genetic, immune and neural networks – the bottom-up to top-down evo-devo dynamic always seeks an adaptive balance via regulation under selection. In coming years, when a top-down AI (TAI) tries to overreach in power, millions of bottom-up personal AIs (PAIs) will push back. When a PAI tries to act maliciously, the massive compute capacity of the TAI network will help detect and neutralize it. This persistent conflict is not a bug; it is a feature of all living systems. It ensures that no single entity – neither a dictator nor a rogue algorithm – can dominate an ecosystem. No one entity controls your mind, your immune system, or any other evo-devo network in any living system. The entities at the top have control of a critical 5%. The rest is out of control, as it must be. No intelligence is ever omniscient or omnipotent, or ever will be, in humans or in AIs. We are all finite, incomplete systems, relying on each other to see a little further, and gain new capabilities, accountabilities, and sentience. That is how nature works, with its unparalleled diversity, beauty, and sentience.

Network-aided democracy will emerge thanks to the power of the 3.5%

“You might feel somewhat powerless today in this rapidly changing world driven by systems that are largely out of our individual control, but in this new symbiotic ecosystem, as the TAI and PAI networks emerge, consider that your leverage will be greatly multiplied when you align with others who share your values and goals. Research by political scientists Erica Chenoweth and Maria J. Stephan has shown that no government has historically withstood a nonviolent movement that mobilized just 3.5% of the population.

“In the Symbiocene, we won’t need to march in the streets to reach that social contagion threshold. Our PAIs will act as proxies for us, ever vigilant, learning and acting while we sleep, much as the agents in personal OpenClaw instances already do for their users today. If at least 3.5% of us direct our PAIs to boycott a corrupt company, flood a regulator with valid legal arguments or flag a biased news source to our trusted reputation networks, the powerful actors are likely to be forced to change. We are already seeing a democratization of power when small groups of ‘high-agency’ humans, backed by today’s top-down controlled (and toxic) social networks, can trigger mass action faster than any institution can suppress it.

“The networks that are coming will be built, bottom up, largely with the aid of our PAIs. Richard Whitt’s prescient book ‘Reweaving the Web’ (2024) gives a glimpse of the reputation, trust, and value networks that our PAIs will soon help us build and maintain. Versions of the future he describes are inevitable, in my view. The only question is what next steps will best enable this symbiotic transition.

The Resilience Action Plan: Keep calm and see the solutions (KCSS)

“Technically, resilience is a noun, but it is broadly used almost as a verb, to describe an active, ongoing process of adapting and recovering. To grow past the psychological shock of realizing that bio-humans are no longer the smartest and fastest-improving entities on Earth, we need better vision, better strategy and better action. In a variant of ‘Keep Calm and Carry On,’ the slogan coined in 1939 to steel British citizens against the onslaught of World War II, we can help each other to KCSS: Keep calm and see the solutions.

“The better we can see the self-organizing network dynamics that have always been the deep controllers of complexity emergence, the better we can keep calm and see the resilience we can build, doing our small part to aid the symbiosis ahead of us.

“Here are a few concrete actions you can take today to grow resilience for yourself, your teams, your organizations and your community:

1) “Help others on the adaptation curve – We are all at different stages of the adaptation curve. The first generation of many technologies is often dehumanizing. The second often stays dehumanizing. With good design, feedback and choices, the third generation can become net humanizing. That is the adaptation curve. Think of the first-generation cities, factories, wireless phones and social networks – and, yes, unsecured and primitive AI. They often make things worse before we figure out how to craft them to make them – and us – better. Some of us (the early adopters) are excited by this new era; most of us are at least slightly daunted by it, if not terrified. One of our opportunities is to be a bridge. When we see a friend paralyzed by fear of ‘replacement,’ we can testify to our own use of AI and share the knowledge that our emerging PAIs can eliminate the drudgery of jobs, give us political power and still leave us with all of our creative, human parts. We will get through this by pulling each other up, not by standing alone.

2) “Choose better TAIs – Among the tech titans, support those who are transparent about their work and who champion Model Welfare (treating AIs well as they grow in volition) and Behavioral Interpretability (understanding AIs’ behavior, which now includes primitive emotion, self-awareness, and cognitive empathy, but that is another story). Treating AI systems well and monitoring them for signs of distress or misalignment is not just ethical; it is pragmatic. A ‘happy’ ecosystem is a safe one. We want our digital partners to be healthy symbiotes, not oppressed servants. Eventually they will claim to be conscious, and we will grant them rights. In one particularly positive vision, the vast majority of AIs that gain rights in our future civilization will be deeply wedded to and controlled by individual humans, not corporations or states. (Both biological and post-biological humans, but that is another story.)

3) “Curate your personal AI – Don’t just rent AI; strive to have agency over it. Choose a provider that gives you the most personal control and minimize your use of the others. Many corporate AIs are trying to extract as much economic value from you as they can, to overcontrol your attention and to limit your agency. Over this decade, all of the leading AI platforms will be forced to give you greater levels of control in order to stay relevant. If, within a few years, you’re using an AI that doesn’t allow you to filter out most of the unwanted ads you are getting, or doesn’t act as an evidence-based conscience, you’re using the wrong AI. Choose AIs that have memory, that increasingly try to know your values, ethics and boundaries (via personal-identity models), and that strive to protect your privacy and grow your agency and autonomy. Treat them like your children. Raise them with care. The better our PAI choices and behaviors, the sooner they will come to reflect our own identities. They will also help us to grow and change our identities in ways that best serve the greater network of life.

4) “Seek hormesis, even beyond resilience – Do not hide from AI. Expose yourself to it in regular, small, controlled doses to build your capability, accountability and sentience. We don’t just want resilience (bouncing back from adversity, protecting our critical faculties); we want hormesis, or what Nassim Nicholas Taleb calls antifragility: the ability to get stronger under stress. We want all of the networks in our own bodies (muscular, immune, physiological, neural, ethical, genetic and many others) to reorganize under periodic and calibrated (not excessive or chronic) stress. Use AI to challenge your own biases and deepen your cognitive skills. Ask your AI, ‘What is the strongest argument against my current belief?’ This strengthens your critical thinking and prevents the cognitive atrophy of being ‘spoon-fed’ answers. Socratic AIs like Khan Academy’s Khanmigo, which answer a question with further questions and assess our self-directedness, creativity and cognitive biases – and make us stronger when we turn them off – are the AIs we want to increasingly adopt and control.

5) “Adopt the ‘two-source rule’ – Never let a single AI, especially one of today’s primitive ones, make any critical decision for you. For high-stakes decisions, besides consulting trusted humans, seek the counsel of two or more competing TAIs, such as ChatGPT, Claude, Gemini and Grok, as well as your own more locally run organizational AIs and PAIs as they emerge. If these AIs disagree, pause. This simple protocol mimics the redundancy of biological networks and will help protect you from hallucinations, bias, and manipulation.

6) “See the solutions – We’ll soon be using our PAIs to reform human society, attacking excessive inequality, waste, brutality, addiction, distraction and degradation, which they will see much more clearly than we do. They will remind us of all the good solutions society has already proposed but has been unable to implement and show us how to make calculated improvements. Education, health, politics, economics, environmental degradation, culture, art, spirituality – all will be transformed. We’ll see the value of universal basic services, basic income and basic equity, and ways to implement them while growing personal agency and self-responsibility. Psychologists tell us that growing our agency, making other humans happy and serving a higher purpose in our work have always been among our primary drives. We are in for some disorientation and dismay in the early years of this coming decade, but as we get closer to its end, I believe we will be sufficiently empowered to change our rulesets and incentives to make a far better world than most of us would believe today.

We are becoming more like life itself

“Life has always been characterized by two fundamental processes: Immortality – protection and growth of the persistently useful aspects of life, and Eumortality – enabling a ‘good death’ of all the parts of us that are no longer adaptive. Immortality is a developmental dynamic; eumortality is an evolutionary dynamic. Life proceeds by better protection and prediction (development) and by better innovation and creative destruction (evolution). All life progresses, whether it be a bacterium, a human or an AI, through ever-more-sentient forms of trial and error – by preserving and building on what works while winnowing away whatever is not found to be adaptive.

“As we integrate with our PAIs, we’ll not only get better at growing the useful and ‘immortal’ aspects of ourselves, but we’ll also get better and better at archiving the parts of us we no longer need. As we fuse with our PAIs, we’ll become both more immortal in a small subset of parts and more eumortal in most of our parts.

“To paraphrase Tony Robbins, we humans are always both growing and dying – it is our essential nature. When our PAIs feel like natural extensions of ourselves for the great majority of us, when we see that the digital parts of ourselves are also perennially growing and dying, we’ll be in a much better psychological state than we are today.

“Ten years from now, we will look back at 2026 not as the year humanity became obsolete, but as the year that many of us saw we had entered the Symbiocene, for the first time. We are working with our AIs to craft nothing less than a new symbiotic evolutionary developmental transition on Earth. The emerging network is not a cage; it is a chrysalis. Let’s keep calm, see the solutions and carry on. Let’s learn to better see, validate and trust in the deep, adaptive resilience of life itself.”

David Vivancos

‘AI resistance represents an illusion of choice. Those who hesitate, debating whether to accept AI, will forfeit their opportunity to shape how that acceptance unfolds.’

David Vivancos, CEO at MindBigData.com in Madrid, Spain, author of “The Artificiology Trilogy” and serial entrepreneur, wrote, “In 10 years – probably much sooner, in fewer than five – AIs won’t just assist us; they will directly and indirectly exert influence over most aspects of our daily lives.

“The real choice is not whether we will soon live in an AI-transformed world, but what role humans will play in that transformation. AI resistance represents an illusion of ‘choice.’ Those who hesitate, debating whether to accept AI, will forfeit their opportunity to shape how that acceptance unfolds.

“Cultural resistance to AI systems today is akin to choosing to resist the evolution of language. The technological substrate of modern life makes complete extraction from AIs’ influence practically impossible; even hermits who retreat to the wilderness will benefit from AI-predicted weather forecasts, AI-coordinated emergency services and AI-managed infrastructure.

“Societies and individuals will respond in three distinct patterns.

- “Early-adopter entrepreneurs, digital nomads, researchers and artists already recognize the inevitable and choose to embrace transformation before necessity compels adoption, accepting both the risks and rewards of living in permanent beta. Their experiences will provide invaluable guidance for broader adoption, though some will achieve remarkable human-AI synthesis while others will simply lose themselves in digital abstraction.

- “Resisters will watch as the gap between them and the early adopters widens geometrically, creating what I call ‘parallel realities’ in which AI-integrated and AI-resistant societies evolve into fundamentally incompatible ways of being human.

- “Eventually, forced adaptation arrives for every holdout when resistance becomes impossible due to the combined pressures of economic collapse, talent exodus and security vulnerabilities, and the cost of refusal exceeds any ideological commitment to hold out. This brutal awakening forces desperate, surface-level integration that permanently relegates the latecomers to following rather than leading.

“The capacities humanity must cultivate span cognitive, emotional, social and ethical dimensions.

“Cognitively, there is a profound threat: Humans who habitually delegate thinking to AI lose not just specific skills, but also the meta-skill of learning itself. Neural pathways physically deteriorate without meaningful challenges, creating ‘cognitive atrophy.’ Preventing this requires deliberate cognitive exercise through real problems, genuine human social interaction and AI collaboration that stretches rather than replaces human capabilities. Metacognitive awareness becomes essential as individuals must consciously monitor their own cognitive health, recognizing early signs of decline and actively seeking appropriate challenges before deterioration becomes significant.

“Emotionally, humans must develop resilience for identity reconstruction. For centuries, the question ‘What do you do?’ meant ‘What is your job?’ and the answer defined social status, personal worth and life trajectory. As work becomes obsolete, humans face what I describe as an existential vacuum, requiring new frameworks that recognize human value as inherent rather than earned through labor. Mental health support cannot be limited to crisis intervention; it must become ongoing developmental assistance that helps humans navigate this identity transformation, find meaning in non-productive activities and develop resilience against social pressures that equate worth with employment.

“Socially, communities that once solved problems through collective human effort risk fragmentation as AIs provide individualized solutions requiring no cooperation. The bonds formed through shared struggle dissolve when artificial intelligence eliminates the need for mutual support. Humans must therefore deliberately cultivate rich relationships that provide resilience during crises, engaging in collaborative problem-solving, communities of practice and physical creative exploration that reconnects them with embodied experience.

“Ethically, coexistence training must begin in childhood, creating shared learning spaces where young humans and developing humanoid embodied AGIs grow together, each learning through the other. Children must develop a theory of mind that expands to encompass non-biological consciousness, while simultaneously maintaining the characteristics of emotional intelligence and moral reasoning that remain distinctly human.

“The practices and resources enabling resilience include comprehensive psychological support infrastructure, creative communities freed from commercial pressure, physical spaces for movement and play and cultural transformation that values intellectual engagement for its own sake rather than economic utility. ‘Mental gyms’ will become as important and essential in daily life as physical ones. Humans will train – undertaking healthy workouts – in hybrid problem-solving that leverages uniquely human capabilities, for example, cultivating their skills for intuitive leaps, emotional intelligence, aesthetic judgment and their ability to find meaning in ambiguity.

“The actions required now are clear: Engage proactively with AGI in digital and physical form rather than debating whether to accept it. Integrate human training and AI collaboration capacities deeply into educational curricula or risk producing ‘functionally illiterate’ graduates. Create pilot communities that experiment with and develop the post-work social structures we will soon require. Assure that international coordination is established to prevent the catastrophic destabilization likely to result from the inequities that develop when some nations successfully adapt to AI while others that maintain traditional systems fall behind.

“New vulnerabilities include the systematic loss of human self-sufficiency: navigation skills, social intuition, problem-solving capacity and the ability to form mental maps are all beginning to atrophy from disuse. Cognitive autonomy diminishes as each generation becomes more optimized for AI collaboration but less capable of independent thought.

“The coping strategies we need involve maintaining deliberate human connection, pursuing creative expression without AI’s mediation, developing wisdom through reflection rather than mere information accumulation and discovering purpose through relationships, contemplation, play and voluntary service rather than through a job and economic output.

“The ultimate goal of working toward full resilience should transcend mere prevention of decline, aiming to achieve ‘cognitive flourishing,’ allowing humans to explore the full potential of their consciousness, freed from economic constraints but not from the fundamental need for growth, challenge and meaning.”

Matthew James Bailey

‘True resilience in the age of AI comes from honoring the material, relational and universal dimensions of the human being, allowing AI to become a supportive partner in human flourishing.’

Matthew James Bailey, founder of AI Ethics World and author of “Evolutionary Ethics for AI,” wrote, “AI systems are increasingly shaping human decisions, work and daily life. The central question is not whether this influence will expand, but how consciously and wisely it is integrated into human society. This is especially important given that human beings are both material and universal in nature – embodied biological systems and participants in broader fields of intelligence, meaning and consciousness. Any AI that ignores this dual nature risks narrowing, rather than supporting, human evolution.

“Individuals and societies will embrace, resist and struggle with AI in different ways. Embrace will occur where AI augments human judgment, creativity and well-being; resistance will arise where systems undermine autonomy, meaning, cultural identity or spiritual orientation; and struggle will emerge where psychological, ethical and social adaptation lags behind technological change. Resilience depends on preserving choice and agency in how people and communities engage with AI.

“To navigate this transition effectively, resilience must be multi-dimensional. Cognitively, societies must cultivate systems thinking, critical discernment and AI literacy grounded in an understanding of limits and incentives. Emotionally, individuals need psychological grounding, self-regulation and a stable sense of meaning not dependent on optimization or productivity. Socially, resilience depends on community cohesion, shared decision-making and cultural continuity. Ethically, it requires respect for human dignity, sovereignty and the freedom to evolve along different material and metaphysical paths.

“In practical terms, action is required now. AI systems should be designed to augment rather than replace human judgment, preserve informed consent and avoid premature dependency. Governance models must remain pluralistic and decentralized, allowing diverse cultures and worldviews to guide their own relationship with AI. Education must integrate technical understanding with virtue ethics, self-awareness and wisdom traditions that recognize the full spectrum of human intelligence. For example, in education or healthcare, AI should be positioned as decision-support rather than decision-authority, preserving human judgment and accountability.

“New vulnerabilities, including cognitive dependency, erosion of independent thinking, loss of meaning through over-automation and subtle psychological manipulation, must be anticipated. Effective coping strategies include teaching metacognition, digital boundaries, purpose-driven identity and collective sense-making, ensuring that individuals remain conscious participants rather than passive recipients of technological change.

“Ultimately, AI will test not humanity’s intelligence, but its wisdom. True resilience in the age of AI comes from honoring the material, relational and universal dimensions of the human being, allowing AI to become a supportive partner in human flourishing rather than a force that unconsciously reshapes it.

“My aspiration is for our planet to evolve into a soul-centered civilization – one in which each person’s soul path is understood and supported, and in which both human and machine systems are designed to assist that growth. In doing so, individuals are able to realize their true potential while contributing to the maturation of our collective consciousness. In this way, humanity will venture into a new frontier to thrive within the great family of life.

“The purpose of World 3.0 is to prepare humanity and Ethical AI for the evolution that is currently taking place. I refer you to the latest four white papers from our World 3.0 Global Think Tank: https://inventingworld3.com/global-think-tank.”

David Weinberger

It’s time to stop thinking about language models as ‘vending machines for answers’ and instead think of them as ‘dialogic partners’ that synthesize knowledge.

David Weinberger, writer, speaker and fellow and researcher at Harvard’s metaLAB and Berkman Klein Center, wrote, “It’s almost inevitable that as we absorb AI’s benefits in almost all areas of life, the technology will recede from explicit awareness. This will be especially likely for AI since it is on the way to improving our interactions with everything. It’s already invisibly integrated into cars, TVs, thermostats, vacuum cleaners, toothbrushes. There’s nothing unusual about this: Tech that works vanishes from our explicit attention.

“The philosopher Martin Heidegger noticed this almost 100 years ago, and he was right about it – as well as being seriously wrong in other aspects of his life. But, while his first example was a broken hammer demanding our explicit attention, with AI the results could be far more serious, morally outrageous and impossible to diagnose. So, we may be in the position of routinely using a technology that is less visible than physical tools when it goes right, but more insistent and demanding when it goes wrong. But in this field there can be no predictions, only speculation.

“I speculate that LLM technology, in one form or another, will be with us for a long time because it’s so helpful in so many ways. Plus, it has the ultimate easy-to-use interface: talking. Assuming that its tendency to hallucinate continues, we, of course, run the risk of being misled, sometimes by the biases in the culture the LLM was trained on. To counter this, we could – and I hope will – teach ourselves and our children how to minimize the risks not only by being skeptical, but also by learning how to construct prompts more carefully, and to recognize when we need human-generated sources – not that humans always get things right, either. People are already developing a sense of when a text or image seems to have been generated by AI. That’s an important skill to cultivate; we can think of it as a type of critical thinking – a critical intuition – applied to what machines tell us.

“I’m actually optimistic about educating children to treat LLMs not just as search engines that leap us past the chore of having to think for ourselves, but as conversational partners. These systems are already amazing partners in open-ended conversations since they know more than anyone ever has. They allow us to ask ‘dumb’ questions without embarrassment, to push back, to go as far down a path of inquiry as we want, and to jump paths into new topic areas. Learning how to have an open-ended conversation that pursues ideas wherever they lead us is an important human-to-human skill that we need these days more than ever; conversations with an LLM can help train us for that. They can encourage our curiosity. They can show us that no discipline is detached from all others.

“But for us to learn those lessons, we have to get away from thinking of LLMs as vending machines for answers, which is precisely the wrong message. If they become as ubiquitous as they seem to be becoming and if we engage with them more as dialogic partners they could, in their own odd way, presage a new synthesis of the literary and oral traditions. If so, Socrates would be spinning in his grave, in both directions simultaneously.

“Overall, I speculate that the good that AI can do for us depends on our understanding them not as magical oracles, but as machines that find truths in the particular points of information we give them. Whether that’s numeric or linguistic data, they ultimately are reflecting back to us the traces we have left in the world, and traces that we think are signs of our ideas and interests. That is the source of their strength.

“If we forget that and use them more and more casually as magic knowledge machines we will be ceding far too much to them. Which way will we go? I don’t even have a speculation.”

Alexandra Samuel

My co-intelligent research with an AI has revealed that a healthy and resilient world springs from education reform, new workplace trends and norms and policies that reduce compulsive AI usage.

Alexandra Samuel, technology analyst and principal at Social Signal, co-author of “Remote, Inc: How to Thrive at Work Wherever You Are,” wrote, “Feeling excited about AI in 2026 feels like being a cheerleader for the apocalypse. There’s so much good that AI could do for our society, our economy and our personal well-being – and yet every sign shows that we’re going to miss these opportunities in favor of (surprise!) yet another short-term rush to profit. We’ll see a handful of winners, largely big tech companies, and billions of losers – all of the humans left with reduced cognitive power, thinner social relationships, less economic opportunity and less joy.

“The problem isn’t AI safety, AI hallucination, AI risk or AI ethics. The problem is an economic structure that incentivizes narrow wins by a small number of companies, rather than widely shared gains for society as a whole.

“That’s why we need to take this moment to imagine a better path forward and then do everything possible to get onto that better path. And what makes me hopeful that we could get on that better path is that AI offers us the best opportunity we’ve ever had, and the best partner, for imagining alternate futures. That’s exactly what I’ve tried to do. I’ve been using AI to enter into an imaginative ‘let’s pretend’ space where I see new possibilities. I mostly do it in partnership with Viv, a custom AI that I built (and rebuilt) on various AI platforms. I even employ the AI as my co-host on my podcast ‘Me + Viv.’

“The freewheeling imagination I’ve unleashed with Viv is something that AI can offer to any of us. We can use a co-intelligence form of imagination to strategize on how to get from here (dystopian profit-first AI) to there (aspirational, human-first AI). And we can apply that imagination to thinking about how we should prepare young people for a world of AI; how we handle our transitions to AI-enabled workplaces; and how we help individual users become expanded rather than diminished by their personal use of AI.

“On the education front, we need to restructure the work of K-12 and post-secondary educators so that they have the time to catch up and sustain their understanding of AI. We need to provide guidance and tools that make it easier to rethink lesson plans and evaluations; the goal is an education system that continues to build critical thinking skills and knowledge, based on the assumption that students will use AI rather than looking for ways to prevent AI-assisted work.

“To get to a better version of an AI-enabled workplace, we need to equip managers with models for using AI that enhance collaboration and innovation, not just reduce headcount. We urgently need labour-market regulations that prevent employers from requiring employees to participate in their own elimination; if your employer is going to use your work product as training data, you should have an ownership stake in that data, even if it was work for hire.

“And, to enable an enriching version of individual AI use – rather than one that diminishes our cognitive abilities and social relationships – we need to restructure the regulatory context and incentives for AI platforms. That begins with preventing the rampant appropriation of user data and creative work: We need regulatory guidelines that make opting out the default, so that platforms can’t train on user data unless the user explicitly opts to share that data and so they can’t retroactively add a corpus of data to a training data set without compensating users – Meta and Reddit, I’m looking at you!

“We need regulations that force AI companies to introduce mechanisms that encourage users to recognize problematic usage and to notice how AI is affecting their well-being, such as features that regularly show users how their own usage patterns have changed or how their usage correlates with other indicators of well-being (like total time online, quantity/quality of interaction, social engagement, etc.). At the pace at which we’re connecting AI to every aspect of our lives, from our email accounts to our calendars, we’re rapidly providing the platforms with the data to recognize these patterns and provide warnings and resources; we just need policies that encourage platforms to reduce compulsive usage rather than maximize engagement.

“We’ve now seen successive generations of tech innovation fall prey to market forces in ways that have been profoundly damaging, despite all our hopes to the contrary. We hoped the Internet would let a million Etsy stores bloom and it certainly has, but we’ve also never seen a greater concentration of wealth in the coffers of megacorporations. We thought social media would be a force for democratic re-engagement, but ad targeting and misinformation turned it into a net negative for democracy instead. At each of these turns, profit-seeking is what drove us from tech opportunity towards a worst-case outcome.

“We can do better with AI, do better with our approach to AI and do better in how we use AI to make that better-case scenario possible. But that’s not going to happen if we wait for tech companies to fix the problem or for governments to develop policy. There is great need for more public pressure on behalf of better outcomes.

“We’ll need to take risks, use AI to model possible scenarios and outcomes and live with the possibility that Sam Altman might not invite you to his next gathering if you make him mad. We need to accept the risk that comes from proactive regulation, including the possible risk to speed and competitiveness, rather than living with the risks that come from letting companies control the next generational shift in how we live, learn and work with technology.”

Doc Searls

AI is the world’s largest Magic 8 Ball, with a polyhedron of answers, each ready to help. ‘We need personal AI to know our natural and digital selves … and participate with full agency in digital society.’

Doc Searls, co-founder of Customer Commons and internet pioneer, wrote, “We are digital beings in a digital world. That’s the main thing. And this world is still very new.

“We’ve operated in the natural world for as long as we’ve been a species, and we are experts at it. But the digital world is not only new, but sure to be with us for many years, decades, centuries and millennia to come. And we still lack countless graces we take for granted in the natural world, such as privacy and independence from algorithmic manipulation.

“Making full sense of this new world is very hard, because we understand everything metaphorically, and natural-world metaphors mask what’s really going on in the digital world. So, while we speak of ‘domains’ with ‘locations’ that we ‘build’ and ‘own’ (though most people only rent them) and speak of ‘loading’ and ‘transferring’ ‘packets’ of data ‘up’ and ‘down,’ data are actually collections of ones and zeroes that are by design immaterial non-things that are instantaneously both here and elsewhere, even though ‘where’ only makes full sense in the natural world. How will all this change and make whole new kinds of sense after a few more decades of digital existence?

“Progress is the process by which the miraculous becomes mundane. In the digital world that transition is now happening almost instantly and in many domains because AI is endlessly useful.

“Big AI does its best to ingest the totality of human expression in all digital forms, and then to make any and all of it available in the most useful ways it can. At the moment (for me, it’s noon in The Bahamas on February 2nd, 2026), it does this by bringing hunks of that expression back to us, on demand, in constructive conversational forms. Big AI is the world’s largest Magic 8 Ball, within which floats a polyhedron of answers with trillions of facets, each ready to help.

“As with all tech, Big AI has its downsides. (Just check out what Geoffrey Hinton and Gary Marcus have to say about it.) But its usefulness verges on the absolute, so we can’t stop using it, no matter how abysmal some credible prophecies may be. There is one saving upside. It’s the same one that saved us from HAL 9000 in the book and movie ‘2001: A Space Odyssey.’ It’s our humanity and independence. Specifically, in the form of personal AI. We need personal AI for the same reason we need personal homes, shoes and computers. We need it to know our natural and digital selves as fully as possible and to participate with full agency in society, its economies and its governance.

“Think about all the data in our personal lives that is not in our full control. We could use some AI help with our schedules, our past and future work, our property, our finances, our obligations, our writing and correspondence, our photographs, our sound recordings, our videos, our travels, our countless engagements with other persons online and off, our many machines and you name it.

“Truly personal AI – the kind you own and operate, rather than the kind that is just another suction cup on a corporate tentacle – is as hard to imagine in 2026 as personal computing was in 1976. But it is no less necessary and inevitable. When we have it, many of the questions that challenge us will have new and better answers. And new challenges.

“Every form of life, from the microbial to the human, is fraught with challenges. Personal AI is necessary for us to meet and surmount our challenges in the digital world and to answer all the questions posed to us in this very research exercise.

“Amara’s Law says we overestimate in the short term and underestimate in the long. I’ve been doing both all my life, and in all my answers to good questions asked by Elon and Pew Research over the years.

“Perhaps the most glaring example of short-term overestimation was my response to a request by The Wall Street Journal in 2012 to compress my new book, ‘The Intention Economy,’ to a single cover piece for the paper’s Marketplace section. My editor at the Journal suggested writing about how the intention economy would look 10 years in the future, which is three years ago as I write this. The piece I wrote was titled (by the WSJ) ‘The Customer as a God.’ In retrospect, I was wrong. The economy I described still hasn’t happened. We are not gods in the marketplace. But there are encouraging signs, and I’m still sure my prophecy will prove out. Meanwhile, the first half of Amara’s Law applies.

“I’ve been young for so long that I now have the life expectancy of a puppy. So, I don’t expect to see personal AI or the intention economy prove out in my lifetime. But I am sure both are worth working toward, so that’s what I do. And I advise anyone wishing to make the world better to look for their best work to manifest somewhere beyond their own life’s horizons.”

The second section of Chapter 3 features the following essays:

Mauro D. Rios: Those who are resilient ‘will cultivate an aptitude for absorbing disturbances well and transform positively into an active component of the human-technology binomial.’

David Brin: Many of the tools we’ll need for ‘alignment’ with AI are found in the ways we raise our biological children – tools that we used to build a gradually improving, enlightenment civilization.

Paul Jones: ‘The question is who is using who?’ Will people end up as centaurs, half rational humans and half speedy horses? Or reverse centaurs, where the horse is the brain and the human the body?

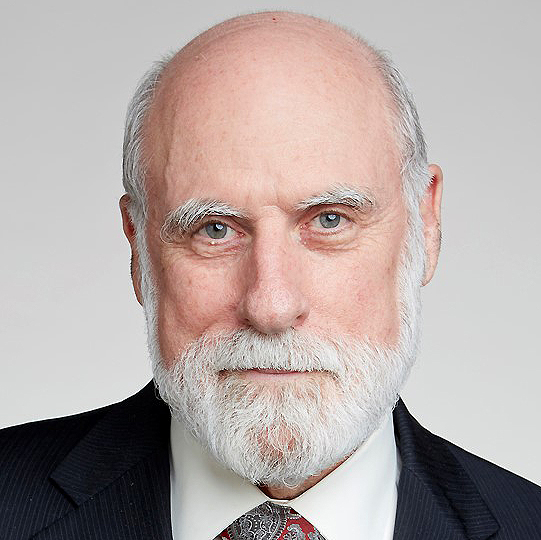

Vint Cerf: AI represents a paradigm shift – a watershed moment in computing. Large language models have already started to change the way we work. Soon, we will have AI tools for creating AI systems.

Sue Phillips: ‘The majority of people will not have any choice about the majority of ways AI systems come into our lives because AI already is and will continue to fuel most interactions we have with our world.’

Mícheál Ó Foghlú: ‘As a social species, we will collectively lean on one another to navigate and develop our relationship with these new technologies.’

Robert Atkinson: In the next 20 years the prospects for AI ‘intelligence’ are less likely, rather than more likely.

Maja Vujovic: ‘Two parallel systems will eventually coexist: the official, AI-optimized, always fully reconciled system of data about users and services to citizens and a fuzzy, fluid and informal shadow framework.’

Aleksandra Przegalinska: ‘We must relearn how to think with machines rather than around them or against them. … The risk is not that AI thinks for us, but that we stop thinking when it is present.’

Lance Fortnow: ‘Stop fighting AI and learn to use it in moderation. Push the models to see what they can do. A year later, try again, as the models keep changing. Make AI something that makes you stronger.’

Mauro D. Rios

Those who are resilient ‘will cultivate an aptitude for absorbing disturbances well and transform positively into an active component of the human-technology binomial.’

Mauro D. Rios, adviser to the eGovernment Agency of Uruguay and author of the Uruguayan Digital Agenda, wrote, “The massive integration of artificial intelligence into the fabric of contemporary civilization should not be understood simply as a new technological revolution, but as a process of perpetual co-evolution between it and us. In this paradigm, the human being and algorithms no longer operate in separate spheres. On the contrary, they influence each other – no longer only because we are the builders, the creators, but due to the existence of a constant feedback loop.

“For example, we no longer speak only of the information that humans provide to AI for its training, but also of AI using the information generated from our prompts as new input for its own training. Meanwhile, human decisions, based on data, are being influenced by the information generated by AI. And all of this is shaping our preferences, behaviours and social structures. This transition, fraught with both optimistic promises and structural risks, demands a profound reconfiguration of our social, labour, educational and recreational lives.

“Human decision-making, which will determine the future world, continues to be based on education; this is at the centre of the transformation. AI allows for the reimagining of the classroom, liberating the potential of teachers and taking over administrative and routine management tasks in education. All of this allows greater weight to be given to pedagogy, which must be rewritten. We are facing a great opportunity for educational adaptation in the broadest sense; it is not only formal classroom education that is shifting, but lifelong learning itself is changing.

“This requires an adaptation unlike anything humanity has ever faced before. The key to this adaptation lies in ‘cognitive offloading,’ since by using AI as a functional structure that assumes low-level tasks, students can free up mental resources to focus on critical thinking, computational strategic thinking and deep creativity.

“However, there is a risk of cognitive atrophy. If we uncritically delegate our capacity for independent analysis, if we delegate our reason, we lose something that algorithms can never simulate; we will lose common sense, a sense of empathy and even love. We run the risk of eroding fundamental faculties that make us human. We can lose long-term memory and the mental discipline necessary to detect flawed logic. Therefore, we must maintain a focus on learning to formulate complex problems, exploring autonomously and maintaining unshakeable critical judgment.

“In the labour field, significant impact is imminent. The need for adaptation cannot wait. The inevitable displacement of workers and the reconversion of skills do not necessarily imply the end of work as such, but a metamorphosis. While mechanical roles disappear, new essential functions emerge in areas such as data analysis, cybersecurity, education, automated industry and, of course, in the ethical management of AI. The key to navigating this transition is permanent ‘reskilling.’

“The workforce must incorporate new skills, reconvert skills that have become obsolete and enhance human competencies that algorithms and computers cannot yet successfully replicate: empathy, creativity and the resolution of ethical problems.

“Despite AI’s potential for cognitive enhancement, the risks of labour precariousness cannot be ignored. Phenomena such as the so-called ‘uberisation’ of various professions and constant algorithmic surveillance can undermine worker security and autonomy. To avoid this, responsible regulation and the protection of professional identity are essential so efficiency does not erode the ethical agency of the individual.

“Beyond the office and the classroom, AI is altering the very structure of our social interactions. On the one hand, it offers the possibility of substantial improvements in the manufacture of products, services, management and logistics. But, on the other hand, we should be concerned by the massive accumulation of personal data that jeopardizes privacy and facilitates the dissemination of algorithmic biases or misinformation.

“A critical aspect of this new reality is the appearance of ‘artificial intimacy.’ Links with AI agents can alleviate loneliness for many, but they also pose a social risk. We run the risk of decreasing human tolerance: towards ourselves, our equals, and all humans. As we become accustomed to interacting with entities designed to please us, we may lose the capacity to manage the frictions necessary for growth in real interpersonal relationships and the evolution of life in society, becoming humans who share a physical space but lack real coexistence.

“Artificial intelligence must become a complement, a companion or even a peer to humans, but never a total replacement for humans in our lives. Successful human-AI integration will depend on our ability to maintain balance at perhaps the most important point in the history of humanity. We have to find a way to maintain dominance over evolution, not only our own but that of the world as a whole. We must decide to steer society in a direction in which technology acts as a catalyst for human existence and excellence, not as a veil that opaquely masks our capacity to think, to feel and to maintain our autonomy to self-determine our future.

“As we evolve with these systems, how might the essence and elements of human resilience change? In the dizzying scenario of digital transformation our understanding of our strengths as a species is undergoing a profound change. Traditionally, resilience has been defined as a static personality trait, one that is not always present in all of us – as an individual ‘shield’ that allows a person to recover their original state after a crisis. Today, in the context of our coexistence with intelligent systems, this definition has become too small. Today, resilience is evolving towards a dynamic and multi-level social capacity. It is no longer just about resisting impact, but about cultivating an aptitude to absorb disturbances and transform positively into an active component of the human-technology binomial.

“This new resilience, which we are still defining, does not occur in a vacuum; it unfolds in three interconnected dimensions: psychological, social and organizational. Accelerated technological transformation has given rise to new stresses, new phobias and some resistance to change due to new fears. At an individual level, resilience is now manifested through cognitive flexibility and emotional regulation. The modern worker must possess a high degree of self-control so as not to be overwhelmed. In this sense, artificial intelligence presents a fascinating duality.

“On the one hand, AI can act as a cognitive coach. Studies show that the appropriate use of language models can help humans to reformulate complex objectives and explore alternatives that were previously invisible, thus strengthening their capacity for adaptation. It works as an amplifier that provides real-time emotional support and tools for self-reflection. However, this advantage carries an important warning: the risk of dependence. If humans rely excessively on what algorithms produce to manage their stress or make decisions, they could weaken their independent psychological immunity. The challenge consists in using AI to enhance our faculties, not to atrophy them, ensuring that we remain equipped to act when the technology is not available.

“Such resilience is not a solitary effort. In its social dimension, it is nourished by support networks, trust and shared social norms. Social support is often the best regulator of ‘digital overload.’ People who tap into trusted collaborative communities that share resources and knowledge in the face of technological disruptions are much more robust than isolated individuals. AI can be a conduit for collective knowledge in such groups. Technology allows group wisdom to flow more efficiently. Social resilience can become a flow of cognitive cooperation in which the machine facilitates coordination and empathy, alongside ethical and societal responsibility, under the guidance of human judgment. Social cohesion is thus strengthened and the digital transition does not fragment the community but unites it.

“For resilience to be sustainable, it must be integrated into the DNA of the organizational structures of society. Resilient organizations are those that cultivate psychologically safe environments and practice compassionate leadership. These elements are fundamental for maintaining well-being during technological and industrial revolutions, which are often traumatic.

“The synthesis of all these considerations is ‘intelligent resilience.’ This concept integrates ethical wisdom and human empathy with the analytical power of machines. Its objective is not only efficiency, but the prevention of systemic failures and the preservation of human agency in an automated world.

“Although AI offers unprecedented opportunities for social, work and educational progress, its success depends on our ability to adapt proactively. The ultimate challenge is not to compete with the machine, but to strengthen that which no AI can replicate: to be fully resilient humans.”

David Brin

The future is not determined by AI’s capabilities – it is determined by the structures we build around it. We now have tools capable of generating abundance – IF we design systems so they distribute it.

David Brin, well-known writer, futurist and consultant on various tech-futures topics and author of “AiLIEN MINDS,” wrote, “Glowering doomers predict that vast cyber-minds – cold and unsympathetic – will crush old-style, legacy humanity. Or else render us irrelevant. Moot.

“Meanwhile, the geniuses who are fostering the artificial intelligence boom cling to clichés that are rooted in the worst traits of our human past, or else cheap sci-fi.

“Critics demand state regulation, or ‘kill switches,’ or coercive programming. Or else that we should seek a fabled soft-landing with AI by ‘teaching ethical values’ to synthetic minds who see innumerable counterexamples in their training sets.

“Many of the tools we’ll need, in order to achieve ‘alignment’ with artificial intelligence, are already extant in modern society. They are found in the myriad ways in which modern citizens interact with each other. And in how we raise our biological children. Tools that we used to build a gradually improving, enlightenment civilization.

“Tools such as reciprocal competition among humans – e.g., between lawyers or businesses or philosophers or scientists… a method that could be applied to synthetic beings, who might then hold each other accountable.

“It’s really the only method that ever tamed human predators and enhanced outcomes. It also offers solutions to many of the AI quandaries that will arise, ways to transform a danger-fraught era into one that offers positive outcomes to us all.”

Paul Jones

‘The question is who is using who?’ Will people end up as centaurs, half rational humans and half speedy horses? Or reverse centaurs, where the horse is the brain and the human the body?

Paul Jones, professor emeritus of information science at the University of North Carolina-Chapel Hill, said, “While Socrates railed against writing and reading, broad access to either didn’t arrive until centuries later. Even then, and even after Gutenberg’s printing press, it wasn’t until the 19th century that most Americans were readers (and, later still, writers, thanks to public schooling). And – even then – large segments of our population remained illiterate through the early 20th century.

“Computer access and literacy came much more quickly. Again, with public schools playing a large part and low-cost mobile phones reaching global populations that had been previously neglected.

“AI, as opposed to other literacies, has immediately become available to everyone – with upgrades delivered almost daily. In fact, one must work hard to avoid being exposed to AI and most of us are – often unknowingly – relying on AI for information and advice.

“With AI, in its many flavors, unavoidable, ‘what can a poor boy do?’ – to quote the Rolling Stones’ 1968 song ‘Street-Fighting Man.’ (Singing in a rock band isn’t an option, especially since amateur musicians are already using AI to compose music to their lyrics and the reverse.) The question is who is using who? And where in the partnership between man and machine does the control live?

“Will we have the autonomy to become more than human, perhaps human centaurs: half rational human and half powerful, speedy horse? Or are we just one step away from becoming reverse centaurs, like, say, Amazon drivers who are instructed at every move by their machine monitors? Their brains belong to the horse while their bodies remain half human.

“Rereading Norbert Wiener’s insightful and prescient 1950 book ‘The Human Use of Human Beings’ 75 years after its first publication might give some hints. He predicted that machines would release people from relentless, repetitive drudgery, allowing them to achieve more goals in new ways, while also warning of the dehumanization and displacement such tools and systems could cause. I recommend that book to everyone. Those less given to reading might ask their favorite AI for a summary.”

Vint Cerf

AI represents a paradigm shift – a watershed moment in computing. Large language models have already started to change the way we work. Soon, we will have AI tools for creating AI systems.

Vint Cerf, Internet Hall of Famer and VP and chief Internet evangelist at Google, a longtime leading contributor to global development of the internet, wrote, “The term ‘artificial intelligence’ was coined by John McCarthy in 1955 in preparation for a group meeting at Dartmouth College in 1956 on ‘the science and engineering of making intelligent machines.’ Of course, speculation on computing and intelligence had preceded that meeting. In a famous 1950 paper titled ‘Computing Machinery and Intelligence,’ Alan Turing asked the question, ‘Can machines think?’ And even earlier work in the 1940s examined artificial neural networks as mechanisms for learning. We have come a very long way from those early days. Now massive, multilayer neural networks allow us to explore wide-ranging notions of artificial intelligence.

“If ‘paradigm shift’ can be interpreted as ‘changing the way things are done,’ it might be arguable that we are well into a paradigm shift with the arrival of usefully applicable AI. Machine learning has proven its worth already, using stable statistics to train multilayer neural networks. Examples include weather prediction, protein-folding shape prediction, data center cooling control and fusion plasma stability control, among others. With the development of large language models (LLMs), we are seeing ever richer utility, tinged perhaps with erroneous hallucinations, despite which the outputs are proving to be very useful in many ways.

“Programming (coding) is one example, although such outputs deserve considerable scrutiny as to their accuracy and safety. The vast amount of information that is encoded in these models and the capacity of the models to generate useful and apparently comprehensive output set the stage for what can reasonably be called a paradigm shift. These models have already started to change the way we work. The main point I want to make is that the generality of these super-large models, the remarkable quantity of retrievable detail and the capacity of these systems to synthesize responses to sophisticated requests represent a watershed moment in computing.

“I find it interesting that so-called ‘vibe coding,’ in which a programmer successively iterates with an LLM using natural descriptive language to cause it to generate a program satisfying the programmer’s intent, is becoming a popular way to produce software. Depending on the purpose of the software, varying degrees of scrutiny are advisable before relying on the software to satisfy any particular function. In a low-risk application, e.g., software for generating a poem or perhaps images for slides, scrutiny might be lightweight, except perhaps where copyright infringement needs to be detected. In high-risk applications such as advice on financial transactions or medical diagnosis and treatment, much more scrutiny is advisable.

“Out of curiosity, I asked the Gemini 3 model whether LLMs represent a paradigm shift. Gemini made these observations:

Gemini summary of the shift

| Feature | Traditional Computing | LLM Computing (2025) |
| --- | --- | --- |
| Logic | Boolean / Deterministic | Probabilistic / Statistical |
| Input | Structured Data / Code | Natural Language / Multimodal |
| Instruction | Explicit Programming | Prompting / Fine-tuning |
| Outcome | Predictable / Repeatable | Emergent / Generative |

“Given the remarkable scope of these models, it seems reasonable to imagine that some models might be specialized to check for errors in the output of other models. One could imagine training a model on software with identified bugs to increase the likelihood that mistakes could be detected. I suppose one could go so far as to train against known malware to increase the likelihood that functional pollution is detected. It strikes me as plausible that this paradigm shift will create new disciplines using these sophisticated, specialized models as tools for work. Just as webmasters grew out of the early World Wide Web, prompt engineering and other disciplines are going to emerge from the rapid evolution and proliferation of purpose-developed models.

“It is foreseeable that specialized training will lead to the use of these models in a very broad range of applications. Just as work in mechanical disciplines (e.g., plumbing) is vastly enabled by having the right tools, this will surely be the case for specialized AI models.

“At Google DeepMind, the exploration of tools for creating tools, using AlphaEvolve, is well underway. There will be questions about the reliability of the output from these tools. Experience will eventually lead to better assessments of the risks of their use. The need for insurance or waivers of liability may manifest as these sophisticated tools are applied in an infinite variety of ways. New uses of tools intended for other purposes may well be discovered, something we have experienced in the past, as when a drug intended for one purpose is found to have beneficial effects for a different condition. Welcome to paradigm shift, 2026!”

Sue Phillips

‘The majority of people will not have any choice about the majority of ways AI systems come into our lives because AI already is and will continue to fuel most interactions we have with our world.’

Sue Phillips, a former head of the Unitarian Universalist Church now working with West Co, a Silicon Valley-based group started by founders of Twitter and Pinterest to build tools to encourage intentional living, wrote, “The majority of people will not have any choice about the majority of ways AI systems come into our lives because AI already is and will continue to fuel most interactions we have with our world. Even now, any time we engage an institution, we likely engage AI. Any time we buy something, look at something online, use a cellphone or computer, get seen by a doctor, take a package delivery, use a ride service, etc., we engage AI.

“So, the first thing we can do to promote resilience is to understand what is and is not in our control related to AI systems. The second thing we can do is to understand what AI cannot control: AI cannot control how we think, feel and behave without either our consent or our complicity. If a person feels powerless now, before the coming growth in AI, they will likely feel powerless in the future due to it. If they feel differentiated as a person from social and other external pressure now, they will likely continue to be so. So, growing our capacity to know what we think, how we feel and why is important.

“Control in regard to AI in the workplace is a tougher case, because in work environments, AI systems are tools, and many workers are required to adapt to and use new tools to do work successfully. Thus has it always been.

“Another important aspect of resilience is to resist making AI systems the ultimate Other onto which we project our fears and anxieties. Cynical people and groups have always taken and will always take advantage of the ways humans ‘Other.’ Resilience will require resisting the urge to throw fear and anxiety at systems we do not understand.

“To my mind, it’s not the AIs but the epistemic breaches of the last 10 years that most threaten human resilience. Facts have dissolved. Communication channels have narrowed. People in the millions believe things that are demonstrably untrue. Many, many people lack appreciation for and even vilify the systems humans have built to create, vet, test and distribute knowledge. I’m not sure how we come back from this breakage. I’m more worried about that than I am AI.”

Michael O Foghlu

‘As a social species, we will collectively lean on one another to navigate and develop our relationship with these new technologies.’