Many experts are concerned about how the adoption of AI systems over the next decade will affect essential human traits such as empathy, social/emotional intelligence, complex thinking, ability to act independently and sense of purpose. Some have hopes for AIs’ influence on humans’ curiosity, decision-making and creativity.

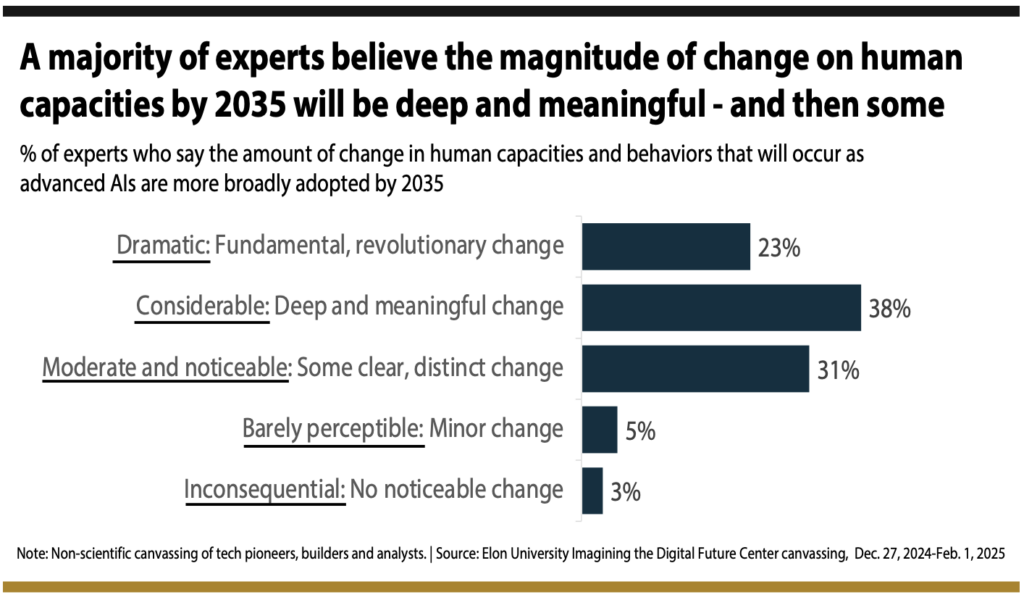

A majority of global technology experts say the likely magnitude of change in humans’ native capacities and behaviors as they adapt to artificial intelligence (AI) will be “deep and meaningful,” or even “dramatic” over the next decade. The results are based on a canvassing of a select group of experts between Dec. 27, 2024, and Feb. 1, 2025. Some 301 experts responded to at least one part of the canvassing. Nearly 200 of the experts wrote full-length essay responses to a longer qualitative query: Over the next decade, what is likely to be the impact of AI advances on the experience of being human? How might the expanding interactions between humans and AI affect what many people view today as “core human traits and behaviors?” Their revealing insights are featured on the 228 pages of essays directly following this report’s three introductory sections. We lead off with highlights emerging from the quantitative questions.

The 301 experts who responded to the quantitative questions were asked to predict the impact of change they expect on 12 essential traits and capabilities by 2035. They predicted that change is likely to be mostly negative in the following nine areas:

- Social and emotional intelligence

- Capacity and willingness to think deeply about complex concepts

- Trust in widely shared values and norms

- Confidence in their native abilities

- Empathy and application of moral judgment

- Mental well-being

- Sense of individual agency

- Sense of identity and purpose

- Metacognition

Pluralities said they expect that change for humans by 2035 will be mostly positive in these areas:

- Curiosity and capacity to learn

- Decision-making and problem-solving

- Innovative thinking and creativity

Many more details, statistical graphics and expert quotes digging more deeply into these experts’ opinions on each of the 12 essential traits they were asked to weigh are found in a section following this initial briefing on overall results.

The magnitude of change by 2035:

These experts say they foresee significant change ahead in regard to these human capacities and behaviors.

They were asked, “What might be the magnitude of overall change in the next decade … in people’s native operating systems and operations – as we more broadly adapt to and use advanced AIs by 2035?”

Some 61% of these experts said the change will be deep and meaningful or fundamental and revolutionary.

They were asked how much humans’ expanding use of AI tools and systems “might change the essence of being human, the ways individuals act and do not act, what they value, how they live and how they perceive themselves and the world.”

50% of these experts said they expect the overall impact of change in being human for those adapting to AI is likely to be for the better and for the worse in fairly equal measure; 23% said it will be mostly for the worse; 16% said it will be mostly for the better.

Only 6% said they expect to see little or no change in the essence of being human by 2035.

Nearly 200 of the experts wrote full-length essays on the primary topic: Being Human in the Age of AI

An overwhelming majority of those who wrote essays focused their remarks on the potential problems they foresee. While they said the use of AI will be a boon to society in many important – and even vital – regards, most are worried about what they consider to be the fragile future of some foundational and unique traits. At the same time, a plurality of these experts’ essays are leavened by glimmers of hope that ever-adaptable humans will find ways to prevail and even flourish. These experts’ essays provide a wide range of predictions and descriptions of what life might be like a decade from now.

We suggest that you read the report site pages in order by continuing to the end of this page, where you will find a link to the next. However, you can also browse the full essays of the nearly 200 experts by navigating to the web pages named Part I, Part I Continued, Part II and Part III. Following is a series of representative brief excerpts from a few of the essays. (Additional brief excerpts from various experts’ essays will follow through the remainder of the introductory sections of this report.)

Nell Watson, president of EURAIO, the European Responsible Artificial Intelligence Office and an AI ethics expert with IEEE, predicted, “By 2035, the integration of AI into daily life will profoundly reshape human experience through increasingly sophisticated supernormal stimuli. … Future AI companions will offer relationships perfectly calibrated to individual psychological needs, potentially overshadowing authentic human connections that require compromise and effort. AI-driven entertainment, virtual worlds and personalized content will provide peak experiences that make unaugmented reality feel dull by comparison. There are many more likely changes that are worrisome. Virtual pets and AI human offspring may offer the emotional rewards of caregiving without the challenges of the real versions. AI romantic partners could provide idealized relationships that make human partnerships seem unnecessarily difficult. Workplace efficiencies risk reducing human agency and capability. AI platforms potentially threaten individual autonomy in financial and social spheres. … The key challenge will be managing the seductive power of AI-driven supernormal stimuli while harnessing their benefits. Without careful development and regulation, these artificial experiences could override natural human drives and relationships, fundamentally altering what it means to be human.”

Jerry Michalski, longtime speaker, writer and tech trends analyst, wrote, “Multiple boundaries are going to blur or melt over the next decade, shifting the experience of being human in disconcerting ways: the boundary between reality and fiction … the boundary between human intelligence and other intelligences … the boundary between human creations and synthetic creations … the boundary between skilled practitioners and augmented humans … the boundary between what we think we know and what everyone else knows.”

Juan Ortiz Freuler, a Ph.D. candidate at the University of Southern California and co-initiator of the non-aligned tech movement, wrote, “As we move deeper into this era, change may render the very idea of the individual, once a central category of our political and legal systems, increasingly irrelevant, and radically reshape power relations within our societies. The ongoing shift is a profound reordering of the categories that structure human life. The growing integration of predictive models into everyday life is challenging three core concepts of our social structure: identity, autonomy and responsibility. … As AI systems continue to infiltrate various sectors from healthcare to the legal system, decisions about access to services, to opportunities and even to personal freedoms are increasingly made based on data-driven predictions about our behavior, our history and our expected social interactions. These decisions are no longer based on an understanding of individuals as autonomous beings but as myriad data points analyzed, categorized and segmented according to obscure statistical models. The individual, with all the complexity of lived experience, becomes increasingly irrelevant in the face of these algorithms.”

Jerome C. Glenn, futurist and executive director and CEO of the Millennium Project, wrote, “If national licensing systems and global governing systems for the transition to Artificial General Intelligence (AGI) are effective before AGI is released on the Internet, then we will begin the self-actualization economy as we move toward the Conscious-Technology Age. If, instead, many forms of AGI are released on the Internet from the U.S., China, Japan, Russia, the UK, Canada, etc., by large corporations and small startups their interactions will give rise to the emergence of many forms of artificial superintelligence (ASI) beyond human control, understanding and awareness.”

Dave Edwards, co-founder of the Artificiality Institute, wrote, “By 2035, the essential nature of human experience will be transformed … through an unprecedented integration with synthetic systems that create meaning and understanding. … The evolution of technology from computational tools to cognitive partners marks a significant shift in human-machine relations. … This transition fundamentally reshapes core human behaviors, from problem-solving to creativity, as our cognitive processes extend beyond biological boundaries to incorporate machine interpretation and understanding.”

John M. Smart, a global futurist, foresight consultant, entrepreneur and CEO of Foresight University, wrote, “I fear – for the time being – that while there will be a growing minority benefitting ever more significantly with these tools, most people will continue to give up agency, creativity, decision-making and other vital skills to these still-primitive AIs and the tools will remain too centralized and locked down with interfaces that are simply out of our personal control as citizens. … I fear we’re still walking into an adaptive valley in which things continue to get worse before they get better. Looking ahead past the next decade, I can imagine a world in which open-source personal AIs (PAIs) are trustworthy and human-centered. Many political reforms will re-empower our middle class and greatly improve rights and autonomy for all humans, whether or not they are going through life with PAIs. I would bet the vast majority of us will consider ourselves joined at the hip to our digital twins once they become useful enough. … I hope we have the courage, vision and discipline to get through this AI valley as quickly and humanely as we can.”

Richard Reisman, futurist, consultant and nonresident senior fellow at the Foundation for American Innovation, wrote, “Over the next decade we will be at a tipping point in deciding whether uses of AI as a tool for both individual and social (collective) intelligence augments humanity or de-augments it. We are now being driven in the wrong direction by the dominating power of the ‘tech-industrial complex,’ but we still have a chance to right that. Will our tools for thought and communication serve their individual users and the communities those users belong to and support, or will they serve the tool builders in extracting value from and manipulating those individual users and their communities? … If we do not change direction in the next few years, we may, by 2035, descend into a global sociotechnical dystopia that will drain human generativity and be very hard to escape. If we do make the needed changes in direction, we might well, by 2035, be well on the way to a barely imaginable future of increasingly universal enlightenment and human flourishing.”

Vint Cerf, vice president and chief Internet evangelist for Google, a pioneering co-inventor of the Internet protocol and longtime leader with ICANN and the Internet Society, wrote, “On the positive side, these tools may prove very beneficial to research that needs to operate at scale … the discovery of hazardous asteroids from large amounts of observational data, the control of plasmas using trained machine-learning models and near term, high-accuracy weather prediction. The real question is whether we will have mastered and understood the mechanisms that produce model outputs sufficiently to limit excursions into harmful behavior. It is easy to imagine that ease of use of AI may lead to unwarranted and uncritical reliance on applications. … AI agents will become increasingly capable general-purpose assistants. We will need them to keep audit trails so we can find out what, if anything, has gone wrong and how and also to understand more fully how they work when they produce useful results. It would not surprise me to find that the use of AI-based products will induce liabilities, liability insurance and regulations regarding safety by 2035 or sooner.”

Esther Dyson, executive founder of Wellville and chair of EDventure Holdings, a famed serial investor-advisor-angel for technology startups and internet pioneer, wrote, “The future depends on how we use AI and how well we equip the next generation to use it. … AI can give individuals huge power and capacity that they can choose to use to empower others or to manipulate others. If we do it right, we will train children, all people, to be self-aware and to understand their own human motivations – most deeply, the need to be needed by other humans. … They also need to understand the motivations of the people and the systems they interact with. It’s as simple as that and as hard to accomplish as anything I can imagine.”

Up next: Experts Predict Change in 12 Essential Traits

The following 25-page section presents these experts’ opinions on each of the 12 essential traits they were asked to weigh. Each part includes a selection of direct quotes from several experts and a numerical breakdown of whether the respondents think change in this aspect of human activity will be mostly positive or mostly negative by 2035.